AMD’s launch event for its new MI300X AI platform was backed by some of the biggest names in the industry, including Microsoft, Meta, OpenAI and many more. All three said they planned to use the chip.

AMD’s CEO Lisa Su bigged up MI300X, describing it as the most complex chip the company has ever produced. All told, it contains 153 billion transistors, significantly more than the 80 billion in Nvidia’s all-conquering H100 GPU.

It’s also built from no fewer than 12 chiplets on a mix of 5nm and 6nm nodes, using what AMD says is the most advanced packaging in the world. The base layer of the chip is four big IO dies that include Infinity Cache, PCIe Gen 5, HBM interfaces and Infinity Fabric. On top of those sit eight CDNA 3 accelerator chiplets, or “XCDs”, for a total of 1.3 petaflops of raw FP16 compute power.

Arranged either side of those stacked dies are eight HBM3 memory modules, for a total of 192GB of memory. So, yeah, this thing is a monster.

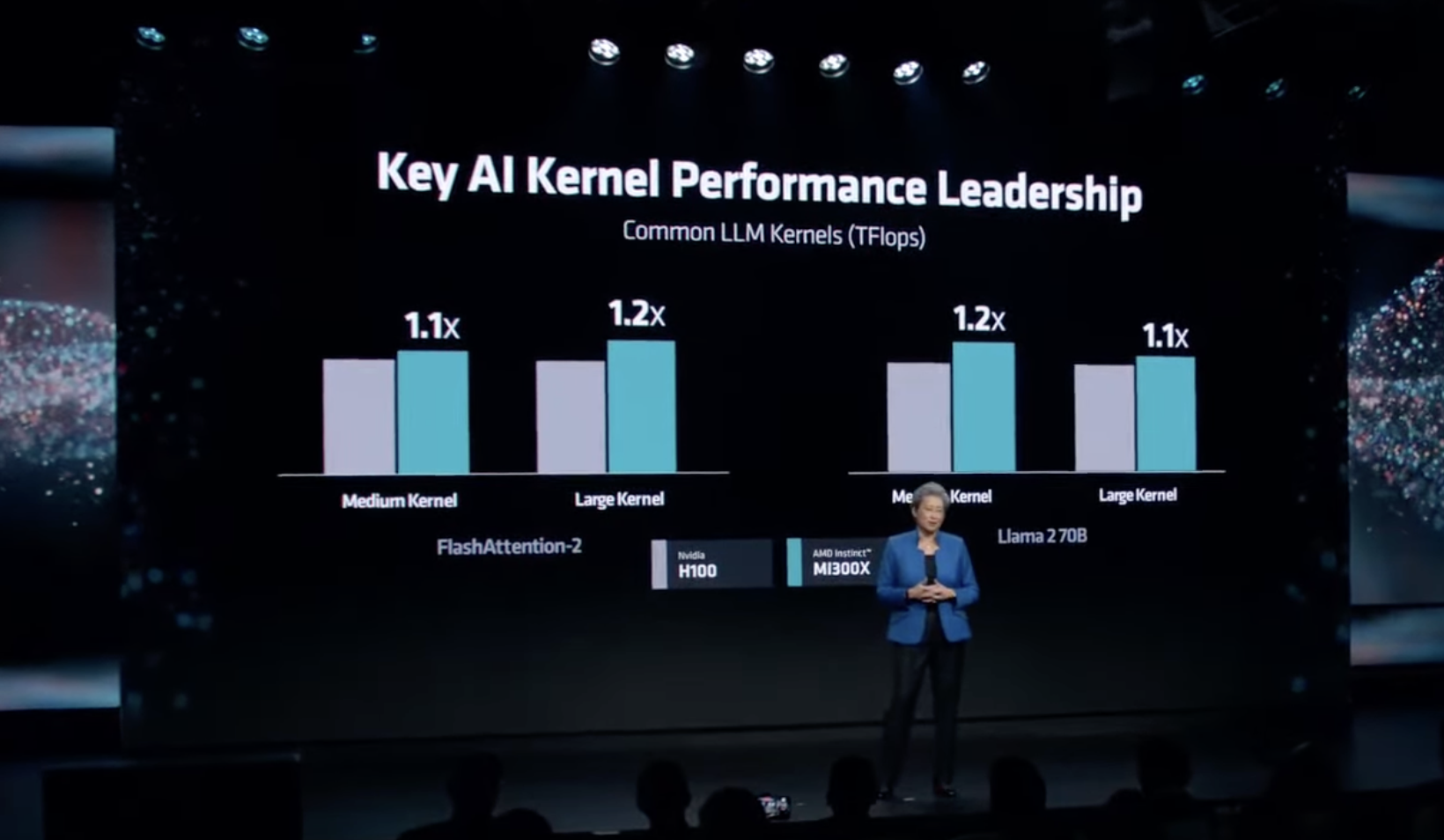

Overall, AMD claims MI300X has 2.4 times the memory capacity, 1.6 times the memory bandwidth and 1.3 times the raw compute power of Nvidia’s H100. In actual AI training and inference benchmarks, AMD generally claims that MI300X is about 1.2 times faster than H100.

In reality, as long as MI300X is broadly competitive on the hardware side, the finer details of how it compares probably don’t matter all that much, because software support is arguably more important. Nvidia’s CUDA platform is far better supported so far than AMD’s ROCm, so the latter has a lot to prove.

It’s worth noting that Nvidia employs more software engineers than hardware engineers, which speaks volumes about where Nvidia places value and importance.

All that said, AMD did emphasise that its memory capacity advantage means you can do much more with MI300X than with Nvidia’s H100. A fully built-up server node of eight MI300Xs delivers 1.5TB of memory, versus 640GB for the equivalent Nvidia H100 HGX node.
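To make the arithmetic behind that node comparison explicit, here’s a minimal Python sketch. It assumes eight GPUs per node and the per-GPU capacities quoted in this article: 192GB for MI300X, and 80GB for H100, which is what 640GB spread across eight GPUs implies. The variable and function names are purely illustrative.

```python
# Per-GPU memory capacities as quoted in the article, in GB.
MI300X_MEMORY_GB = 192
H100_MEMORY_GB = 80  # assumption: implied by 640GB across an eight-GPU node

GPUS_PER_NODE = 8  # both nodes in the comparison hold eight GPUs


def node_memory_gb(per_gpu_gb: int, gpus: int = GPUS_PER_NODE) -> int:
    """Total GPU memory in a node: simply per-GPU capacity times GPU count."""
    return per_gpu_gb * gpus


mi300x_node = node_memory_gb(MI300X_MEMORY_GB)
h100_node = node_memory_gb(H100_MEMORY_GB)

# Ratio of node capacities; with equal GPU counts this equals the per-GPU ratio.
ratio = mi300x_node / h100_node

print(f"MI300X node: {mi300x_node} GB (~{mi300x_node / 1024:.1f} TB)")
print(f"H100 node:   {h100_node} GB")
print(f"Capacity ratio: {ratio:.1f}x")
```

Eight lots of 192GB comes to 1,536GB, which rounds to the 1.5TB AMD quotes, and dividing by 640GB gives the same 2.4x figure AMD claims for a single MI300X versus a single H100.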

How successful MI300X will be very much remains to be seen. While it’s clearly promising to see the likes of Microsoft, Meta and OpenAI supporting AMD’s big AI launch, we don’t know how many chips they’ll each actually be buying.

As we reported, it’s estimated that Microsoft alone bought £5 billion worth of Nvidia H100 GPUs in the most recent completed quarter. What isn’t clear from this event is whether companies like Microsoft plan to spend billions with AMD, or whether their involvement at this stage is as much about keeping Nvidia on its toes, by appearing willing to buy a competing product, as it is about actually buying significant quantities of AMD’s new GPU.

Similarly, AMD is being very conservative with its revenue predictions for MI300X. But does that reflect its true ambitions, or is it trying to set expectations low and then smash through them? As ever, watch this space.

What any of this means for PC gaming is equally foggy, if not more so. If AMD can make serious money on AI chips, then in theory that puts it in a better position to invest in gaming GPU technology. On the other hand, it might also divert AMD’s attention, and indeed its access to cutting-edge manufacturing capacity at its key foundry partner TSMC.

All of which means we’ll just have to wait and see how it pans out. On the whole, we think AMD doing well in AI will be a good thing for PC gamers if it makes for a healthier, stronger AMD. But equally, there are so many variables at play that it’s pretty hard to be sure. If you’re interested in finding out more, you can watch the whole launch event here.