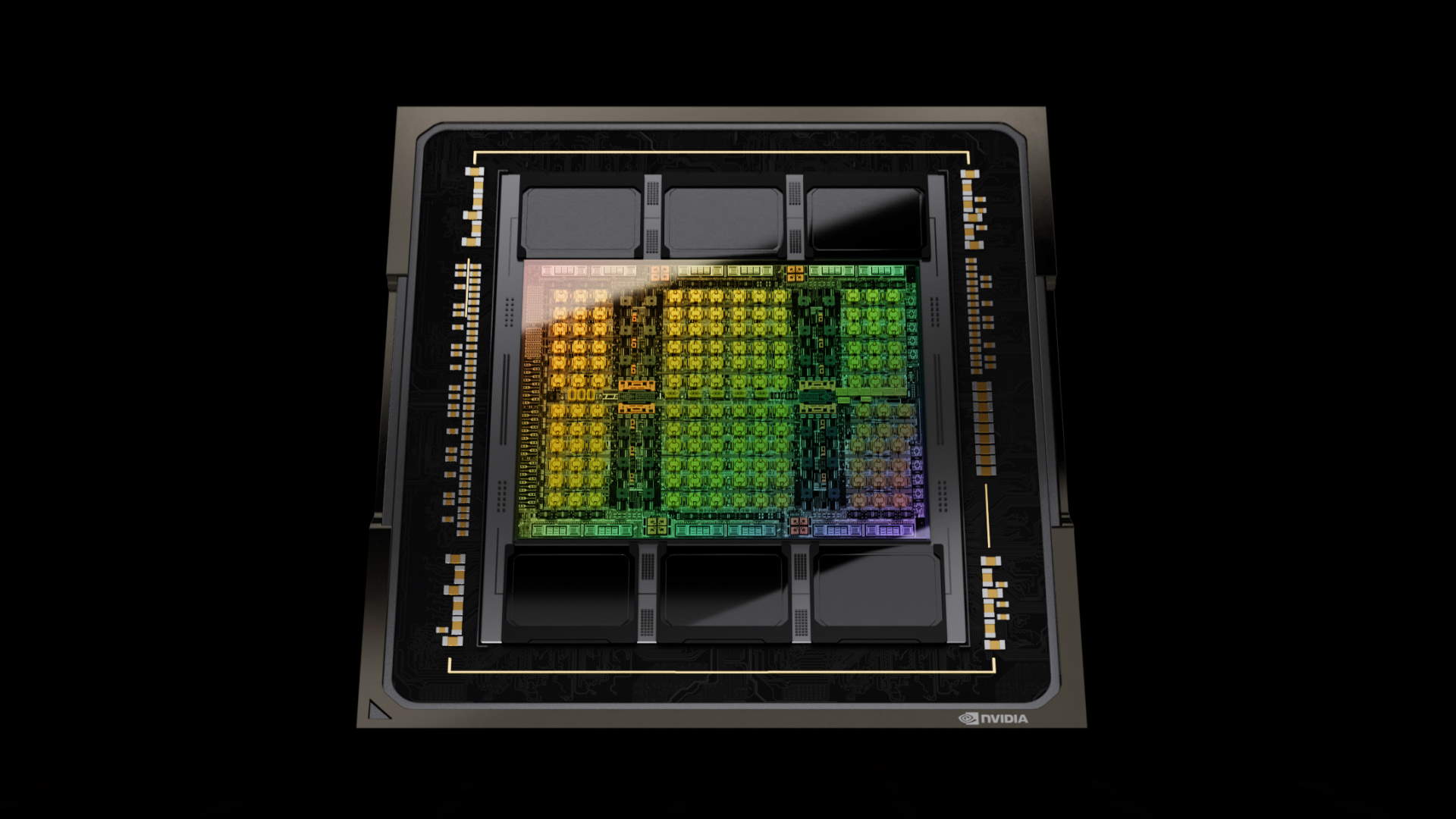

As you are most likely aware, there’s an insatiable demand for AI and the chips it runs on. So much so that Nvidia is now the world’s sixth largest company by market capitalization, at $1.73 trillion at the time of writing. It is showing few signs of slowing down, as even Nvidia is struggling to meet demand in this brave new AI world. The money printer goes brrrr.

In order to streamline the design of its AI chips and improve productivity, Nvidia has developed a Large Language Model (LLM) it calls ChipNeMo. It essentially harvests data from Nvidia’s internal architectural information, documents and code to give it an understanding of most of the company’s internal processes. It is an adaptation of Meta’s Llama 2 LLM.

It was first unveiled in October 2023 and, according to the Wall Street Journal (via Business Insider), feedback has been promising so far. Reportedly, the system has proven useful for training junior engineers, allowing them to access data, notes and information via its chatbot.

With its own internal AI chatbot, Nvidia can have data parsed quickly, saving a lot of time by negating the need to use traditional methods like email or instant messaging to access certain data and information. Given the time it can take to get a response to an email, let alone across different facilities and time zones, this method is definitely delivering a nice boost to productivity.

Nvidia is forced to fight for access to the best semiconductor nodes. It isn’t the only one opening the chequebook for access to TSMC’s cutting-edge nodes. As demand soars, Nvidia is struggling to make enough chips. So, why buy two when you can do the same work with one? That goes a long way to explaining why Nvidia is trying to speed up its own internal processes. Every minute saved adds up, helping it to bring faster products to market sooner.

Things like semiconductor design and code development are great fits for LLMs. They’re able to parse data quickly, and perform time-consuming tasks like debugging and even simulations.

I mentioned Meta earlier. According to Mark Zuckerberg (via The Verge), Meta could have a stockpile of 600,000 GPUs by the end of 2024. That’s a lot of silicon, and Meta is just one company. Throw the likes of Google, Microsoft and Amazon into the mix and it’s easy to see why Nvidia wants to bring its products to market sooner. There are mountains of money to be made.

Big tech aside, we’re a long way from fully realizing the uses of edge-based AI in our own home systems. One can imagine that AI which designs better AI hardware and software is only going to become more important and prevalent. Slightly scary, that.